
- HP Z800 with GTX 1060 (6GB), 72GB RAM, Dual 5650 Hexcore whe...


12-19-2018 05:09 PM

Hi,

I have an HP Z800 with GTX 1060 (6GB), 72GB RAM, Dual 5650 Hexcore with the standard power supply.

While the machine generally runs fine, I feel that the GTX 1060's potential is not being used to the max.

I am doing primarily Architecture visualisation with Rhinoceros and V-Ray.

V-Ray can use GPU power.

Still performance is not much higher than with the old Quadro 4000 I had before.

So I wonder if there is a bottleneck somewhere. Do I need to upgrade the PCI bus? Can this be done? Would anything else help?

If you suggest parts, please point me to the exact part to buy.

Please don't be too technical in your answer.

Ideally I'd like to be able to run Lumion (www.lumion.com) as well.

Thanks for any suggestions to get this bird to its fullest potential.

Prettypicgirl

12-19-2018 06:00 PM

no, you cannot change the pci bus; it is part of the hardware, not software

and since you have not actually provided any information on just why you think your nvidia 1060 card is slow, nobody is going to be able to provide any useful replies other than to recommend you post your cad related questions in the rhino/v-ray forums rather than this forum, as neither piece of software is an HP program which HP sells or directly supports

all i can state is that if the cad packages you mention use the quadro openGL commands, then yes, the old quadro might match the 1060, which does not support the enhanced openGL command set that the quadro line uses

12-19-2018 06:44 PM

Thanks.

I am asking here, because I am willing to modify or upgrade the hardware to make components work better together.

Never thought or claimed that HP creates that software.

I simply ask if there is a way to make the GTX work better with the Z800 hardware.

I am aware that the Z800 was initially designed for Quadro cards.

To V-Ray and Rhino it makes no difference if I use Quadro or GTX. The GTX is more cost effective and I do not need the higher stability or security of the Quadro anyway.

The GTX is running, it's just not much faster, even though it is generations ahead of the old Quadro 4000. So it should be much faster, and there is likely a bottleneck on the hardware side somewhere.

Might be PCI, might be the Xeon, no idea.

The HP machine is great but a bit dated by now. Still at this moment I do not have the funds available to replace it.

12-20-2018 06:43 AM

please reread my comments....................................

"I simply ask if there is a way to make the GTX work better with the Z800 hardware."

and again i point out that asking this question on the rhino/V-Ray forums will most likely provide better replies than this HP forum, since HP does not offer or support either of these programs and as such your chances of finding users of these programs on this forum are rather slim

"To V-Ray and Rhino it makes no difference if I use Quadro or GTX."

Rhino begs to differ and specifically recommends quadro cards over consumer cards, even though it will work with both

https://simplyrhino.co.uk/support/hardware-operating-system/rhino-for-windows

from the above link:

We generally recommend NVIDIA graphics cards as these, particularly the workstation class Quadro cards, are well proven with Rhino.

and v-ray also recommends Quadro (depending on your usage needs)

However, there are certain times where you may want to use a Quadro card. First, they are slightly more reliable since they are designed to operate under heavy load for extended periods of time and tend to have more stable drivers. Second, Quadro cards tend to have more onboard memory. The amount of video RAM will limit how complex of a scene can be rendered in V-Ray RT, so for extremely detailed rendering you may need the additional VRAM on high-end Quadro cards.

https://www.pugetsystems.com/recommended/Recommended-Systems-for-V-Ray-199

again, posting your questions in the forums of both v-ray and rhino will most likely get you the answers you are asking for

12-23-2018 06:49 PM

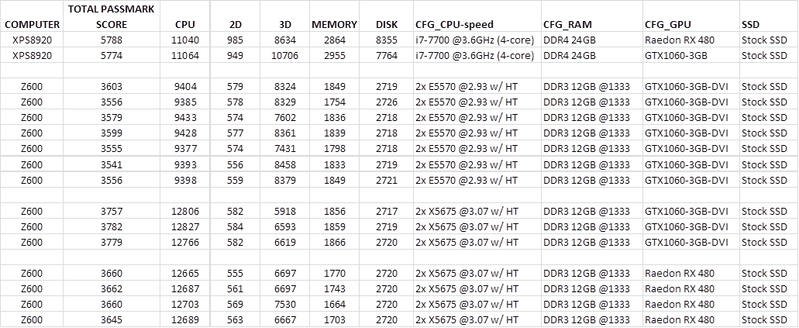

I will chime in and say that I also have the same observation of the GTX1060 being bottlenecked by something in my Z600. I'll add to the discussion my log over the past few months of Passmark v9 scores (over 2 different systems, and two different Z600 CPUs)

4 sections:

I. Benchmarks of a stock Dell XPS8920 with the stock RX480 it came with, and then with my GTX1060. Note the GTX1060 performs very well (10,000+ 3D GPU score).

II. The Z600 then gets the GTX1060 and the 3D GPU score drops (and is kinda flaky, with a bimodal distribution around 8,300 and 7,500).

III. Later, I upgraded to twin X5675 hex cores. CPU score is much better - however the GTX1060 score actually DROPPED (e.g., to 6,600) compared with the 3D scores on the "slower" E5570's...

IV. I decided to pull the Radeon RX480 from the Dell and put it in the Z600... Similar 3D GPU scores...

I will admit, I think Passmark might have some issues, but that is another topic... The only thing I would hypothesize is that possibly the speed/performance of the SSD and the RAM contribute to the GPU performance in the Z600?

So I would also be interested if there is a logical explanation for this... I've pretty much given up on trying to make the GTX1060 benchmark well on the Z600, but I'm open to suggestions... In the BIOS I've tried enabling Enhanced Intel Turbo Boost (helped), and disabling Power Management (negligible effect)...

Thoughts?

FWIW, PassMark Performance Test is here, if the OP is interested:

https://www.passmark.com/products/pt.htm
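
One way to look into the "might be PCI" question raised earlier in the thread is to ask the NVIDIA driver which PCIe link the card actually negotiated. The sketch below is only a rough illustration, not a diagnosis: it assumes `nvidia-smi` is installed and on the PATH, that the four `pcie.link.*` query fields return plain integers, and the helper names (`link_bandwidth_gb_s`, `parse_link_line`, `query_gpu_links`) are made up for this example. As far as I know the Z800/Z600 slots are PCIe 2.0 while the GTX 1060 is a PCIe 3.0 card, so a Gen 2 x16 link would be expected on these machines. The bandwidth figures are theoretical per-direction peaks, not measured throughput.

```python
import subprocess

# (transfer rate in GT/s, line-encoding efficiency) per PCIe generation
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),     # 8b/10b encoding
    2: (5.0, 8 / 10),     # 8b/10b encoding
    3: (8.0, 128 / 130),  # 128b/130b encoding
}

QUERY_FIELDS = (
    "pcie.link.gen.current,pcie.link.width.current,"
    "pcie.link.gen.max,pcie.link.width.max"
)


def link_bandwidth_gb_s(gen, width):
    """Theoretical one-direction bandwidth in GB/s for a PCIe link."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[gen]
    return rate_gt_s * efficiency / 8 * width  # 8 bits per byte


def parse_link_line(line):
    """Parse one CSV line such as '2, 16, 3, 16' from nvidia-smi."""
    gen_cur, width_cur, gen_max, width_max = (
        int(field.strip()) for field in line.split(",")
    )
    return {
        "gen_current": gen_cur,
        "width_current": width_cur,
        "gen_max": gen_max,
        "width_max": width_max,
    }


def query_gpu_links():
    """Run nvidia-smi and return one parsed dict per GPU (assumes integer output)."""
    result = subprocess.run(
        ["nvidia-smi", f"--query-gpu={QUERY_FIELDS}", "--format=csv,noheader"],
        capture_output=True,
        text=True,
        check=True,
    )
    return [parse_link_line(l) for l in result.stdout.splitlines() if l.strip()]


if __name__ == "__main__":
    for index, link in enumerate(query_gpu_links()):
        current = link_bandwidth_gb_s(link["gen_current"], link["width_current"])
        print(
            f"GPU {index}: Gen {link['gen_current']} x{link['width_current']} "
            f"(about {current:.2f} GB/s per direction), "
            f"max reported Gen {link['gen_max']} x{link['width_max']}"
        )
```

If the reported width is below x16, the card may be sitting in a slot that is wired for fewer lanes. The driver can also lower the link generation while the card is idle, so it is worth running the check while a render or benchmark is in progress. A full-width Gen 2 link by itself does not prove that the bus is the bottleneck; it only rules out one obvious misconfiguration.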