Z600 HDD replacement on LSI adapter


03-05-2019 03:33 AM

Hello,

On my Z600 workstation, I have one logical drive set up as an LSI SAS IME array with 3 hard drives.

The LSI Config Utility reports that one HDD in this RAID array has drive status "MISSING" (broken?). The workstation is running normally on the other 2 HDDs, but the whole RAID array has status "DEGRADED". Can somebody help me with:

1. How do I find out which drive is broken? (I don't feel comfortable randomly unplugging one drive after another and checking whether it is online in the BIOS, because I don't know if that could cause the RAID array to collapse.)

2. If I find out which one is broken and just replace it (plug the new one in) on the LSI adapter, will my RAID array be rebuilt automatically without losing the integrity of the whole logical drive? Or is there a procedure for making such a replacement?

Thank You.

03-06-2019 06:27 AM

I would note the model numbers of the drives in slots 1 and 2, then power off the system and trace each drive's data cable to the motherboard SAS/SATA port it uses. Doing so will show you which ports are numbers one and two.

Knowing this, it is then quite easy to identify SAS/SATA port number three on the motherboard and follow its data cable to the third drive, which should be the one that is not showing.

Please read this HP link on replacing a failed RAID drive in the Z series workstation line.

03-08-2019 01:23 AM

Finding the HDD that way should do. Thank you for the advice.

But as for the procedure, I must admit I saw it earlier and I'm not sure it covers my problem. To be exact, the procedure only talks about RAID 1 and RAID 0, and says nothing about RAID 1E (called IME in the LSI settings). RAID 1 is a mirror and is quite simple in construction, and the procedure says you can do a RAID 1 rebuild using the NVIDIA tool. A RAID 1E configuration is a little more complicated, and the question is whether the NVIDIA tool will rebuild my 1E array the same way as a RAID 1 array. Is it the same?

Thanx

03-08-2019 05:44 AM

I am well aware of what RAID 1E is, but for those who are not, here is an explanation:

Some RAID 1 implementations treat arrays with more than two disks differently, creating a non-standard RAID level known as RAID 1E. In this layout, data striping is combined with mirroring: each written stripe is mirrored to one of the remaining disks in the array. The usable capacity of a RAID 1E array is 50% of the total capacity of all drives forming the array; if drives of different sizes are used, only a portion equal to the size of the smallest member is used on each drive.
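To make the capacity rule above concrete, here is a minimal Python sketch. The function names are made up for illustration, and the "mirror goes to the next disk" placement is only an assumed layout; the exact on-disk layout used by the LSI IME firmware is not described in this thread.

```python
def usable_capacity_gb(drive_sizes_gb):
    """Usable RAID 1E capacity: half of (drive count x smallest drive)."""
    if len(drive_sizes_gb) < 3:
        raise ValueError("RAID 1E needs at least three drives")
    # Only the portion equal to the smallest member is used on each drive.
    return len(drive_sizes_gb) * min(drive_sizes_gb) / 2


def mirror_disk(primary_disk, num_disks):
    """Disk holding the mirror copy, ASSUMING a rotate-by-one layout."""
    return (primary_disk + 1) % num_disks


# Hypothetical drive sizes, in GB: three equal drives, then one larger drive.
print(usable_capacity_gb([300, 300, 300]))
print(usable_capacity_gb([300, 320, 300]))
```

Under these assumptions a slightly larger replacement drive does not change the usable capacity, because the extra space on it is simply left unused.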

One of the benefits of RAID 1E over usual RAID 1 mirrored pairs is that the performance of random read operations remains above the performance of a single drive even in a degraded array.

RAID recovery on the LSI 1068E chip is the same as for normal RAID 5: power off, replace the failed drive, and power on. If necessary, tell the LSI controller to start a rebuild/check of the array that had the failed drive replaced.

04-15-2019 05:39 AM

Well, on the LSI card it is not as simple as just replacing the drive, because the LSI BIOS has no option to force an IME rebuild 😞

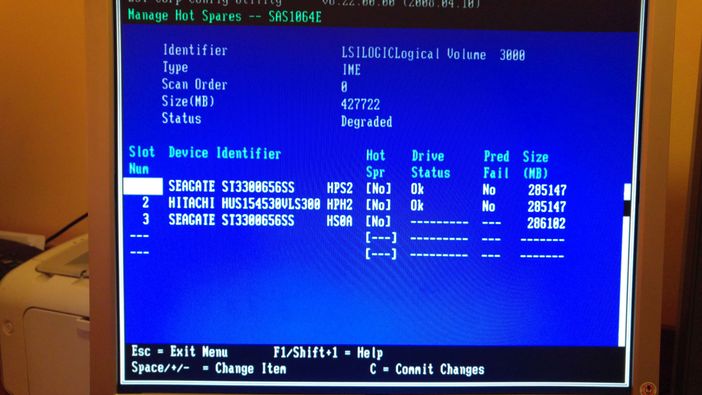

OK, here is what I have managed to do so far. First I replaced the failed HDD by connecting an identical Seagate model to the same LSI port. Unfortunately the LSI didn't see it at all. I figured that the LSI card has 4 ports and maybe one port had failed, so I plugged the old HDD into the spare port and voilà, it appeared in the LSI list, but with status "wrg". So it seems the drive failed anyway, along with the LSI port it was plugged into. When I plugged the new HDD into the spare LSI port it of course appeared in the list with no errors, but that alone didn't solve my problem. Like I said, the LSI BIOS doesn't give you any "force rebuild" option. The only solution is to assign the new HDD as a hot spare, and then the RAID will rebuild itself automatically.

And this is where I am stuck right now: when I enter the "manage hot spares" menu, the LSI doesn't let me add this HDD as a hot spare (the [NO] in the "hot spr" column should be highlighted) and I don't know why. The replacement HDD is almost identical to the previous one (its size is even a little larger, as you can see in the picture, HDD no. 3). Can someone give me a clue on how to enable this hot spare option? I currently use almost 400 GB of space on the logical IME drive; maybe this is the reason? I have no idea...

04-15-2019 08:41 AM

On most controllers you cannot add a hot spare to a failed array, because the hot spare information needs to be stored in a working array, and this cannot be done while the array is in a degraded state.

The hot spare option is where an array has an "extra" drive added during the initial array creation that is unused but part of the array. This drive will auto-configure (i.e. become active) with the failed drive's data.

Back up your current array data, then remove the current array and recreate it.

http://h10032.www1.hp.com/ctg/Manual/c01232490

05-14-2019 06:57 AM

My problem concerns the LSI controller specifically; other controllers are out of my scope at the moment. If I follow your logic, it sounds like a hot spare is obligatory for RAID 1E. Is there any documentation stating that I must install a hot spare on RAID 1E, or else my array will be lost when one drive fails? I always thought a 3-disk RAID array capable of working on 2 disks exists so that you can react when something goes wrong and have both the time and the ability to rebuild the array. I'm confused, to be honest.

Unfortunately I cannot kill this array; I have to rebuild it, because it will not work when cloned to another workstation. I tried that and it failed.

There is one more thing: the HDD I bought has no HP firmware. It has HS09, while the drive that failed has HPS2, and the other two are also on HP firmware. Maybe there is a conflict here?

05-14-2019 09:02 AM - edited 05-14-2019 09:10 AM

Again, RAID 1E is a non-standard RAID 0/1 variant that some RAID controllers support. It is not RAID 5 or RAID 6.

One vendor's 1E setup may not be compatible with another vendor's 1E.

RAID level 1E primarily combines drive mirroring and data striping capabilities within one level. The data is striped across all the drives within the array. It provides better drive redundancy and performance than RAID level 1.

RAID 1E requires a minimum of three drives to be constructed and can support up to 16 drives. RAID 1E only allows half of the capacity of the array to be used. If any of the drives fail, the read/write operations are transferred to other operational drives within the array.

If only one drive in your array has failed, you should perform only drive reads (no writing) on the array until the failed drive is replaced.

RAID 1E can survive a failure of one member disk or any number of nonadjacent disks. In this case, to recover the array you need to disconnect the failed disk(s), connect good disk(s), and start the rebuild as described in the controller manual.
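The "nonadjacent disks" rule can be sketched in a few lines of Python. This is only an illustration under an assumed layout in which each disk's data is mirrored on the next disk, wrapping around at the end; `array_survives` is a hypothetical helper, not anything from the LSI tools.

```python
def array_survives(num_disks, failed_disks):
    """True if every block still has one intact copy (assumed layout).

    Assumption: the data on disk d is mirrored on disk (d + 1) % num_disks,
    so the array is lost only when two neighbouring disks fail together.
    """
    failed = set(failed_disks)
    return all((disk + 1) % num_disks not in failed for disk in failed)


# Three-disk array like the one in this thread (disks numbered 0, 1, 2).
print(array_survives(3, [2]))
print(array_survives(3, [1, 2]))
```

With three disks, any two disks are neighbours under this assumption, so a degraded three-disk array has no remaining redundancy until the rebuild completes.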

(Read the rebuild steps required for RAID 1 on the LSI controller model you have, which is a 1060E as I recall.)

Since you appear to be inexperienced in array management, I again recommend that you back up the data to another drive and then restore the data once the 1E array is rebuilt.

The drive on the third port of your SAS controller appears to be the failed drive. Follow the data cable from the motherboard port to the drive to locate it (again, back up your data before disconnecting a drive!).

Lastly, RAID 5 is not recommended on drives larger than 500/750 GB, due to the rebuild times and the chance of a soft error during a restore operation.