
- NVIDIA GeForce GTX 1080 Ti and RTX 2080 Ti in Z820, Windows ...


01-30-2019 08:10 PM

Hi, I'm thinking of upgrading the GPU in my Z820 used for video production (Resolve, Premiere, etc.). I have a Z820 that I purchased in 2012 with a single Xeon E5-2670 2.60 20MB, 1600 8C, 32 GB RAM, in a 1125W chassis, and an NVIDIA Quadro 4000, running Windows 7 Ultimate (x64). I'm considering an upgrade to either the NVIDIA GTX 1080 Ti (if I can find one) or the RTX 2080 Ti to replace the Q4000. I would like to know if anyone is running either of these cards in their Z820s and if they've had any compatibility or fit issues.

Also, in the 2012 Quick Specs, the only option for 32GB was this option:

32GB DDR3-1600 ECC Load Reduced (LR) RAM A2Z53AA

and my receipt lists the following:

32GB DDR3-1600 (4x8GB) 1CPU Registered RAM (Supported only with Single Processor.)

In the Quick Specs, the following also appears:

NOTE: You cannot intermix registered and unbuffered DIMMs. The system will not work.

NOTE: You cannot intermix LR DIMMs with either registered or unbuffered DIMMs. The system will not work.

I'm confused because it also lists the memory as LR, but the receipt lists it as Registered. Does anyone know if LR memory is in fact Registered (just a special class), even if it can't be mixed with non-LR Registered DIMMs? I just want to have verification of what kind of RAM I have installed (4x8GB DIMMs) in case I need to upgrade.

Any input, advice, or comment would be appreciated.

Cheers,

Shane

01-31-2019 07:43 AM - last edited on 02-05-2019 12:33 PM by WanderP

[edit]

using a older outdated quick specs sheet is not recomended try looking at the april 2015 quickspecs sheet (revision 48)

http://www8.hp.com/h20195/v2/GetPDF.aspx/c04111526.pdf

also there is nothing preventing you from running 1600mhz ram in both cpu memory bamks as long as you stay withing the listed memory limits

last, if you use this system for a living you might want to stay with the HP/software venders hardware recomendations

however if your current system software does not use quadro features, then a non quadro might be worthwhile, check in the user forums of the software apps you use and see what the users there say.

last,... windows 10 is the future whether you like it or not,.... you might want to consider planing for a OS upgrade now

the 1070/80 cards work fine in a z820, however you may need a 1250 watt power supply depending on final system configuration instead of the stock 850 watt unit

01-31-2019 04:09 PM - edited 01-31-2019 09:17 PM

Shane,

Firstly, it seems possible that an RTX might work in a first-version z820 system, but the reference I like to check for reports of component use, Passmark, does not show any baselines for any version of RTX in a z820. The z820 with the highest 2D score uses 2X E5-2687w v2, a GTX 1080 Ti, and a Samsung XP941. The CPU score is 23337 and 3D is 11312. That 3D score is below average for a GTX 1080 Ti, which in Passmark = 14916. For CPU comparison, the highest CPU mark for a single E5-2670 is 13580.

Upgrading a system is a matter of balancing performance between the components, and in my view a GTX 1080 Ti and RTX 2080 Ti are disproportionate to a Xeon E5-2670-based system. The E5-2670 is 2.6 / 3.3GHz. Either of the GPUs named would be throttled by the CPU's relatively low clock speeds. Those cards could provide excellent CUDA compute, but in Premiere the CPU core count will certainly be more important than clock speed, and the GPU is hardly employed. However, Resolve has a quite different component emphasis. One set of Resolve work likes the highest possible clock speed, while color grading likes a lot of cores:

https://www.pugetsystems.com/recommended/Recommended-Systems-for-DaVinci-Resolve-187.

If the upgrade budget is up to $1,300, consider that cost used in a different proportion.

If the system is to eventually have two processors, it would present all manner of advantages to replace the motherboard with one supporting E5 v2. The E5-2600 v2's have higher clock speeds and up to 12 cores instead of the 8 of the first version, use 1866 instead of 1600 RAM, and (I think) have more PCIe3.

The first priority is the CPU. If this system is to be used permanently with a single CPU only, it may be better to sell the z820 and buy a z620 with an E5 v2 motherboard (2013 boot block date). Dual CPU systems are never quite as fast as single CPU systems, as the dual processors have to run through thread distribution and a multiple-processor synchronization parity check.

The next step is to determine the application priority: single-thread performance, which is a function of the top Turbo clock speed (3D CAD), or core count, for data processing or CPU rendering. If it's single-threaded speed, I'd suggest an E5-1650 v2 or E5-1660 v2 6-core or an E5-1680 v2 8-core. All three of those may be overclocked using the Intel Extreme Tuning Utility. If there are eventually to be two CPUs, my choice would be the E5-2667 v2, which is 8C@3.3/4.0GHz, having 8 cores and a good single-thread speed as well - an all-rounder. The 2667 v2 is also less costly than the E5-2687w v2 3.4/4.0GHz.

I use an E5-1680 v2 (rated @ 3.0/3.8GHz) with all eight cores overclocked to 4.3GHz, and that is a good balance between a quite healthy single-thread (2373) and multi-threaded performance. For comparison, the average single thread mark for an E5-2670 is 1571. The overclocking is accomplished by using a z420 liquid cooler in the z620 to prevent thermal throttling. It simply plugs in and works.

If the core count is the priority, then consider 2X E5-2680 v2 or 2X E5-2690 v2 10-cores, both of which have respectable 3.6GHz Turbo speeds. The E5-2680 v2 is the better value and only means a 2.8GHz base instead of 3.0. However, tests using Premiere Pro report that there is no benefit to using a CPU with more than 10 cores:

https://www.pugetsystems.com/labs/articles/Adobe-Premiere-Pro-CC-Multi-Core-Performance-698/

In a dual system that needs both high core count and high clock speed, consider 2X E5-2667 v2, which is 8C@3.3/4.0GHz and quite a bit less costly than the E5-2687w v2 3.4/4.0GHz.

The E5 v2's use DDR3-1866 ECC, and if there are dual processors, registered must be used. I use ECC registered with the single E5-1680 v2, but it is slightly slower than unbuffered because of the 1-bit parity check. For a one-CPU system, I consider 32GB a minimum today and for a dual CPU, 64GB, especially with video editing/encoding on a single or dual processor. For heavy simulation work, 128GB. I use 64GB as I've had renderings that required 37+GB during setup.

A z820 v2 motherboard appears to be reasonably priced these days (2.19). There are also quite a number of z620's with an E5-1650 v2 for quite a small amount of money, and some with the E5-1660 v2 6C@3.7/4.0GHz and an all-core clock of 3.9GHz. The processors mentioned vary in cost, from an E5-1650 v2 up to $250 for an E5-2690 v2, and a bit more for an E5-1680 v2. In any of these, there is still quite a bit left in the hypothetical $1,300 budget for memory (64GB of PC3-14900R), drives (a Samsung 860 EVO 500GB), and a good GPU, perhaps a used GTX 1080 Ti or a new RTX 2070 for $550, or possibly a GTX 1070 Ti, which is very good. In my z620 the 1070 Ti has a Passmark 3D of 12648 - compare that to the 11312 top 3D for a GTX 1080 Ti in a z820. That's the advantage of being able to overclock the E5-1680 v2. And the RTX 2070 has performance above the GTX 1080 (not the Ti) and adds real-time ray tracing for future software that will take advantage of it.

Summary: The first thing is to establish what kind of system is best for Premiere and Resolve - single or dual CPU, core count or clock speed priority - and I'm not entirely sure, but do believe that the E5-2670 will not be ideal for either single-threaded or multithreaded work, regardless of single or dual CPU. In my view, an E5 v2 motherboard is the most logical basis for longer-term investment, and until the CPU, memory, and disk performance is at a quite high level, a very high-end GPU is somewhat wasted. Of course, there may well be certain aspects of, for example, graphic design where the CPU comes into its own, but between Premiere and Resolve, it appears the central focus is primarily the CPU, with memory and drives following.

The planned upgrade is a complex but interesting equation.

Sorry for such a long ramble.

BambiBoom

01-31-2019 08:14 PM - last edited on 01-31-2019 10:45 PM by Cheron-Z

Dear DGroves,

I use forums almost daily for a variety of interests, to both give and receive. I typically ignore pompous, snarky responses from [edit] like you, but this one just wouldn't let me be. So, I'd like to respond to your post properly:

"are there bad keys on your keyboard that prevent you from googling "LR ram" yourself" i suspect doing so would have answer all your questions related to "Load Reduced RAM Modules)"

No, my keyboard works perfectly, unlike yours since, apparently, your Shift key doesn't work, and neither does your spell checker. So...

"using a older outdated quick specs sheet is not recomended try looking at the april 2015 quickspecs sheet (revision 48)"

If you had actually read (and comprehended) my post, you would have connected my use of a 2012 Quick Spec with the fact that I purchased my Z820 in that year, a few months after the version I reference. Which, incidentally, was the exact one I used when specing the workstation originally. As you can now hopefully see, a 2015 Quick Spec would not have been relevant for the information I was requesting. I specifically 'Googled' HP Quick Spec to find the one I needed so, yes, I also know how to use Google.

Also, related to the memory question, you should have seen that I was asking for clarification on the HP Quick Specs notations and those found on my receipt, which differed in their description of the DIMMs installed in my Z820, LR or RDIMMs. Principally, "I just want to have verification of what kind of RAM I have installed (4x8GB DIMMs) in case I need to upgrade."

"however if your current system software does not use quadro features, then a non quadro might be worthwhile, check in the user forums of the software apps you use and see what the users there say."

If you read my post again, you'll probably infer that I've already done that (on multiple forums, including the Resolve forum), and that is exactly why I'm posting here "to know if anyone is running either of these cards in their Z820s ", to quote my OP. Since this is an HP forum, I assumed that there would be users on here who use, um, a Z820. Also, you should have known that programs like Resolve and Premiere (which I listed) both take advantage of CUDA cores, which both the Quadro and GeForce lines possess. The very reason I am looking to upgrade my GPU is that the Quadro 4000 is pre-Kepler, which Resolve 15 doesn't support (it has to be Kepler or higher, i.e., Maxwell, Pascal, etc.)

"last,... windows 10 is the future whether you like it or not,.... you might want to consider planing for a OS upgrade now"

At least you didn't tell me to go out and buy a Mac... Dear sir, I've been in IT for over 30 years, most of that time as a Scientist/SW Engineer developing complex computer simulations of advanced weapons systems, then as a Div. Mgr for a Fortune 100 company managing millions in IT Outsourcing contracts for other Fortune 100 companies (datacenters, desktops, helpdesk, etc.). I have multiple systems running Windows 7, Windows 10, SQL Server, and a couple of MS Server versions, and use what I need, where I need it. I even have a few machines still running XP, and one even running Windows 2000. Your assertion that I am a Win 7 holdout, or don't like Win 10 is absurd, and condescending.

"the 1070/80 cards work fine in a z820, however you may need a 1250 watt power supply depending on final system configuration instead of the stock 850 watt unit"

Yeah, I know they work but, again, if you had taken the time to actually read my post before you started fingering your keyboard, you would have seen that I wanted "to know if anyone is running either of these cards in their Z820s and if they've had any compatibility or fit issues," which is a different question than the one you attempted to answer. Also, you would have seen that in my OP I stated that I had a 1125W chassis. As you may know, every company manufactures their GPUs differently, even if they are functionally the same cards (e.g., GeForce GTX 1080 Ti). Some have a single fan, some have two, and some have three. Some won't fit in the Z820 due to the existing fan shroud, unless they vent out the back instead of into the case, and some are more than two slots wide. I was looking for actual experience from those using the cards listed in my OP in their Z820s, in a similar configuration as mine (as specified). That's more than just the "cards work fine in a z820".

I do thank you for taking the time to comment, though, and for wasting mine.

Cheers,

Griz

01-31-2019 08:32 PM

BambiBoomZ,

I really appreciate you taking the time to provide such a detailed response and analysis, but you misunderstood my post and request for information. My workstation is very specific to my needs and used for video/film editing, color grading, and compositing. I'm not looking to upgrade the machine, just the GPU, and I am considering the two options listed. I have it from other users, particularly from Blackmagic Design themselves and from their user forums, that even the 1080 Ti would completely trounce the (circa 2010) Quadro 4000 I currently run, and since Resolve (and most editing programs) rely more on CUDA cores (or the GPU in general) than on the CPU, the GPU is by far the biggest driver in improving my performance.

Agreed, I would love to have a new i9 system with 128 GB of RAM and give up the Xeon, but it's not really necessary for my needs (at this point), nor would I see significant improvement in performance from such with the applications I'm using it for. I bought the Z820 for its build quality and stability, and that's why I'm being so cautious moving away from HP's recommended Quadro line, even though the GeForce line surpasses it (for video processing) in every way, and even though other users have confirmed they are, indeed, currently using them successfully. A rep from BMD (makers of Resolve) uses the 1080 Ti in a Z420 with exactly the same processor I have in my Z820, and it will play footage smoothly that mine stutters on. That points to my aging Quadro 4000.

I disagree with your assessment of the E5-2670's single/multi-thread throughput. It may be showing its age, but it screams, and chews up pretty much anything I throw at it, whether it be a single-thread app or one using all 16 cores in parallel (like Resolve does). My only problem now is that Resolve can't use my CUDA cores, so it is trying to decode H.264 video on the fly at 60 fps using the CPU. Not good. That's why I need a new GPU.

Thanks again.

Cheers,

Griz

02-01-2019 06:02 AM - edited 02-01-2019 08:15 AM

Griz,

Yes, I should have stated the intentions of the earlier reply more clearly. Here's another go:

On Passmark baselines, of the 21,000+ Hewlett Packard systems tested, there are 0 tested using the RTX 2080 Ti and 129 using the GTX 1080 Ti. There are only 19 of 21,000+ HP systems using an RTX of any model. That does not mean that an RTX 2080 Ti is impossible in a first-series z820, but it does mean spending $1,200 on a GPU will be something of a pioneering experiment.

The more demanding application in the subject system is Resolve, although if you're running complex systems simulations, that could be even more demanding.

Regardless of the GPU choice, the most demanding application in the subject system, Resolve/Fusion, makes high performance demands in two areas: single-thread performance (Fusion) and high core count (color grading). The link to the Puget Systems tests and the focus of separate configurations for Resolve and Fusion make this clear: the Fusion system is based on an i9-9900K, having the highest single-thread performance of any CPU at 2897, while the "Color Grading Workstation" has a base processor of an i9-9920X 12C@4.2 / 4.5GHz, a $1,650 CPU with a Passmark single thread rating of 2529. That is in the top echelon of single thread performance and remarkable for a 12-core.

The signal from that specification is that the ideal Resolve system needs both a lot of cores and high clock speed - an expensive combination, but at least more common now than previously. In my view, the E5-2670, having a Turbo speed of 3.3GHz with a CPU mark of 12138 and a Passmark average single thread mark of 1571, is going to bottleneck a high-end GPU.

It has always surprised me that the 3D performance of the z820 in general is not higher. There are 526 z820's tested on Passmark and the highest 3D mark is 11312 using a GTX 1080 Ti. That system is running a pair of E5-2687w v2's, which have an average single thread of 2038, where the average 3D mark for the 1080 Ti is 14197. Compare the top 3D of the z420 of 14061 on E5-1660 v2 / GTX 1080 Ti and on the z620, 14650 on E5-1680 v2 / GTX 1080 Ti. On a z620 with 2X E5-2670, a GTX 1080 Ti can make 8957, where the top mark on a z820 / 2X E5-2670 / GTX 1080 Ti is 7707, about 14% lower. I use a z620 with a GTX 1070 Ti, having a 3D of 12629 (average = 12300), or about +12% over the best GTX 1080 Ti in a z820.

It's a non-linear ratio, but consider that while the E5-2687w v2, having the highest clock speed of any Xeon E5, produces a 2038 single thread on a CPU average of 16484, the top 3D is only 11312 in a z820 / GTX 1080 Ti. For comparison, the highest 3D mark for a z820 dual E5-2670 system with a GTX 1080 Ti is 7707. That 7707 is about 54% of the 14197 average for a GTX 1080 Ti and only 68% of the highest performance on a pair of the highest-clocked E5-2600's, the E5-2687w v2's.
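Percentages like these shift depending on which score is taken as the base, so they are easy to re-check. Here is a minimal Python sketch using only the Passmark 3D marks quoted in this post; the function and constant names are illustrative, not anything Passmark provides.

```python
def percent_of(score: float, reference: float) -> float:
    """Return score expressed as a percentage of reference."""
    return 100.0 * score / reference


def percent_change(new: float, base: float) -> float:
    """Return the signed percentage change going from base to new."""
    return 100.0 * (new - base) / base


# Passmark 3D marks quoted in this post, all with a GTX 1080 Ti.
Z820_DUAL_E5_2670 = 7707        # top z820 result with 2X E5-2670
Z620_DUAL_E5_2670 = 8957        # z620 result with 2X E5-2670
Z820_DUAL_E5_2687W_V2 = 11312   # top z820 result with 2X E5-2687w v2
GTX_1080_TI_AVERAGE = 14197     # average GTX 1080 Ti figure quoted above

# The same gap reads differently depending on the base chosen.
print(f"z820 relative to z620: {percent_change(Z820_DUAL_E5_2670, Z620_DUAL_E5_2670):+.1f}%")
print(f"z620 relative to z820: {percent_change(Z620_DUAL_E5_2670, Z820_DUAL_E5_2670):+.1f}%")

# Share of the card's average and of the best z820 result.
print(f"share of GTX 1080 Ti average: {percent_of(Z820_DUAL_E5_2670, GTX_1080_TI_AVERAGE):.1f}%")
print(f"share of best z820 result:    {percent_of(Z820_DUAL_E5_2670, Z820_DUAL_E5_2687W_V2):.1f}%")
```

The first two lines show why a gap can be quoted as either roughly 14% or roughly 16%: the difference is the same 1250 points, divided by a different base.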

These are the reasons that, in my view, RTX 2080 Ti compatibility and performance are not confirmed in z820 use, and the GTX 1080 Ti, even with 2X 4.0GHz Turbo E5-2687w v2's, performs well below its average capability. If a 1571 single thread results from an average 12138 E5-2670 CPU rating, the top-rated single E5-2670 system, with the top CPU mark of 13444, will result in a single thread of about 1746. Contrast that with the Puget Systems Resolve system base CPU single thread marks of 2897 and 2529.
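The single-thread estimate above rests on the assumption that the single thread mark scales in proportion to the overall CPU mark. A rough Python sketch of that proportional estimate, using only figures quoted in this post, might look like the following. The function name is illustrative, and linear scaling is a simplification for the sake of argument, not something Passmark documents.

```python
def scale_single_thread(avg_single: float, avg_cpu: float, top_cpu: float) -> float:
    """Estimate a single thread mark by scaling it linearly with the CPU mark.

    Assumption: single-thread performance rises in proportion to the
    overall CPU mark for the same processor model.
    """
    return avg_single * top_cpu / avg_cpu


# E5-2670 figures quoted in this post.
AVG_SINGLE_THREAD = 1571
AVG_CPU_MARK = 12138
TOP_CPU_MARK = 13444

# Single thread marks of the Puget Systems reference CPUs quoted in this post.
PUGET_REFERENCE = {"i9-9900K": 2897, "i9-9920X": 2529}

estimate = scale_single_thread(AVG_SINGLE_THREAD, AVG_CPU_MARK, TOP_CPU_MARK)
print(f"estimated best-case E5-2670 single thread mark: {estimate:.0f}")

for cpu, mark in PUGET_REFERENCE.items():
    print(f"  that is {100.0 * estimate / mark:.0f}% of the {cpu} ({mark})")
```

Under that assumption the estimate comes out in the 1740s, in the same neighborhood as the figure quoted above, and well short of either reference CPU.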

Of course, if the E5-2670 provides satisfactory processing power, by all means retain it - the CPU marks are very good - but be aware that a GTX 1080 Ti or RTX 2080 Ti will lose a noticeable proportion of their capabilities, as the CUDA compute power is dependent on the CPU clock. Until the current generation of Skylake X, Ryzen and Threadripper, and Xeon W-2100, the combination of a lot of cores with a high Turbo clock was rare and expensive. A Xeon W-2145 8-core has an average single thread of 2529, and an AMD Threadripper 2820X 12C@3.3/4.5GHz ($650) has a CPU average of 21432 (top = 24412) and single thread of 2223. A Threadripper 2820X with an RTX 2080 Ti has a 3D mark of 16625. For comparison, the

Obviously, spending money and time on a new system or substantial upgrades can always improve the performance; the question is whether significant investment in the current system will have a satisfactory cost/benefit.

In summary, suggestions are based on optimization within the context of investment in a system with limited potential.

It would be interesting to know how the current E5-2670 system performs on Passmark. Passmark offers a free, full-function 30-day trial and I've found that very useful for comparison and to gauge improvements. In your applications Cinebench R15 is also relevant and informative.

BambiBoomZ

HP z620_2 (2017) (R7) > Xeon E5-1680 v2 (8-core@ 4.3GHz) / z420 Liquid Cooling / 64GB DDR3-1866 ECC Reg / Quadro P2000 5GB _ GTX 1070 Ti 8GB / HP Z Turbo Drive M.2 256GB AHCI + Samsung 970 EVO M.2 NVMe 500GB + HGST 7K6000 4TB / Focusrite Scarlett 2i4 sound interface + 2X Mackie MR824 / 825W PSU /> HP OEM Windows 7 Prof.’l 64-bit > 2X Dell Ultrasharp U2715H (2560 X 1440)

[ Passmark Rating = 6280 / CPU rating = 17178 / 2D = 819 / 3D= 12629 / Mem = 3002 / Disk = 13751 / Single Thread Mark = 2368 [10.23.18]

[Cinebench: OpenGL= 134.68 fps / CPU= 1234 cb [10.27.18]

02-13-2019 07:40 PM

BambiBoomZ,

Sorry for the late response. Thanks again for the detailed and informative response. I really appreciate it. I've done quite a bit more research since your response, and have done some benchmarking using things like UserBenchMark, Cinebench, and RealBench. I did not download Passmark, but may give that a try. My build came in a little higher than average among comparables, but in every case, my GPU (Quadro 4000) was a significant disappointment, as expected.

I have purchased an EVGA RTX 2080 Ti XC which I will be installing next week, and will report back how it performs (or IF). Based upon feedback from folks on the Resolve forum, it appears that even with a GTX 1070 maxed out (100% GPU utilization) on a machine with an i7, the CPU was idling at less than 20%. I've heard over and over that Resolve is heavily GPU focused, so I'm hoping that my CPU will not bottleneck the 2080 too much, if at all, in Resolve. I'm sure my 3D gaming at 60 fps would suffer, though.

I noticed in the benchmarks a lot of focus on 'single core/thread' performance in all of the multi-core systems. Why is that? I understand that even if you have 8 cores with HT (16 cores), that each will still be limited to the single core speed, but if an application is written to take advantage of multiple cores, shouldn't that be the focus of the comparisons? That's the whole point of parallel processing, right?

02-14-2019 04:49 AM - edited 02-14-2019 07:36 AM

GrizzlyAK,

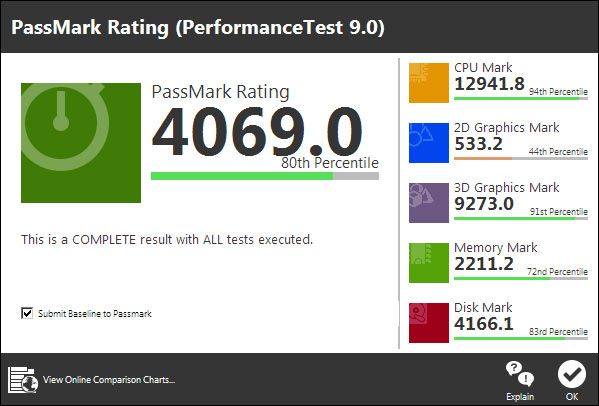

Yes, I'd be very interested to see the RTX 2080 Ti results in a z820. What were the results of the Cinebench? Since writing last time, I see on Passmark baselines that there is now a z820 using 2X E5-2670 / 64GB / SanDisk SSD / RTX 2060. That system on Passmark:

Rating = 3963 CPU= 18813 2D=477 3D=7272 Memory=2211 Disk=3840

For comparison, the average Passmark 3D for RTX 2060 = 13144

The highest 3D mark for a z820 / 2X E5-2670 is 8134, using a GTX 980 (!). The average 3D of the GTX 980 = 9628. The RTX 2060 2D = 477 is also a concern. In the office z620, the 2D= 819 of the GTX 1070 Ti in Corel Technical Designer is only "adequate" and the 3D = 12629 navigation speed in large Sketchup 3D models with a lot of layers and textures is "inadequate."

In summary, using the RTX 2060 as an indicator, it appears that a z820 / 2X E5-2670 may extract about 55% of the potential of the RTX 2060, whereas it may achieve about 84% of the average GTX 980. The highest 3D mark for a GTX 1080 Ti in a z820 / 2X E5-2670 is 7707, as compared to the Passmark world average of 14203. These results are mystifying: the GTX 980 reports better results than the GTX 1080 Ti, and both obsolete GPUs outperform an RTX 2060. Of course, the 2060 is only one sample and, as far as I know, the 2060 does not have the ray-tracing components of the RTX 2070, 2080, and 2080 Ti. As mentioned, the variability of the graphics performance of the z820 has been mystifying for some time.
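For anyone who wants to line the three cards up side by side, a small Python sketch follows. It uses only the z820 / 2X E5-2670 3D marks and Passmark averages quoted in this post; the `Baseline` type and function name are made up for illustration.

```python
from typing import NamedTuple


class Baseline(NamedTuple):
    gpu: str
    z820_3d: int      # best 3D mark seen in a z820 with 2X E5-2670
    average_3d: int   # Passmark average 3D mark for that GPU


# Figures quoted in this post.
BASELINES = [
    Baseline("RTX 2060", 7272, 13144),
    Baseline("GTX 980", 8134, 9628),
    Baseline("GTX 1080 Ti", 7707, 14203),
]


def share_of_average(b: Baseline) -> float:
    """Return the z820 result as a percentage of the GPU's average 3D mark."""
    return 100.0 * b.z820_3d / b.average_3d


# Rank by how much of each card's average performance the z820 reaches.
for b in sorted(BASELINES, key=share_of_average, reverse=True):
    print(f"{b.gpu:<12} z820 3D = {b.z820_3d:>5}  "
          f"average = {b.average_3d:>5}  share = {share_of_average(b):.1f}%")
```

Sorting by share rather than by raw score makes the pattern visible: the oldest card gets closest to its own average, while the two newer cards both land a little above half of theirs.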

As 3D performance is affected by clock speed, it may well be the E5-2670's 3.3GHz maximum Turbo speed that is the limiting factor. In a z820 with 2X E5-2687W v2, which have a 4.0GHz Turbo, the 3D score for the GTX 1080 Ti = 11312 - still well below average, but the GTX 1080 Ti has been used in many, many gaming systems running at 4.5-5.2GHz, which must shift the average considerably.

As mentioned in the previous reply, Resolve appears to be an application that is very demanding on both core count, or rather a high calculation density of CPU clock cycles per unit time, and high single-thread performance. See the Puget Systems specifications for Resolve-focused systems. Current CPU designs have improved significantly in having a lot of cores and high Turbo speeds, but it appears to me that the z820, even using the highest clock speed Xeon (E5-2687W v2 8C@3.4/4.0GHz), cannot achieve full performance from high-end GPUs.

If it's convenient, seeing the Passmark results for the z820 in its current state would be informative to the conversation.

BambiBoomZ

03-08-2019 09:01 PM

Reporting back. The RTX 2080 Ti works perfectly in the Z820 under Windows 7 Ultimate x64. I've had no problems at all since installation and over the last couple of weeks of use. It also solved my issues with DaVinci Resolve stuttering on 60fps H.264 video with the Quadro 4000. I've included the latest Passmark below.

My 2D is low, brought down by dismal Direct 2D performance. Also, my single Thread was the lowest score on the CPU mark. I'm sure my CPU is throttling the GPU somewhere, but it does solve the problem I was facing with video editing (so far). So, I'm happy.